# Hacker News Top 30 — 2026-04-28

Generated on 2026-04-28 03:27 UTC

## [HN-TITLE] 1. Talkie: a 13B vintage language model from 1930

- **Source**: [https://talkie-lm.com/introducing-talkie](https://talkie-lm.com/introducing-talkie)

- **Site**: talkie-lm.com

- **Author**: Nick Levine, David Duvenaud, Alec Radford

- **Submitted**: 2026-04-27 21:55 UTC (Hacker News)

- **HN activity**: 128 points · [39 comments](https://news.ycombinator.com/item?id=47927903)

- **Length**: 2.4K words (~11 min read)

- **Language**: en

April 2026

This is a 24/7 live feed of Claude Sonnet 4.6 prompting [talkie-1930-13b-it](https://huggingface.co/talkie-lm/talkie-1930-13b-it) in order to explore its knowledge, capabilities, and inclinations. talkie’s outputs reflect the culture and values of the texts it was trained on, not the views of its authors.

## Why vintage language models?

Have you ever daydreamed about talking to someone from the past? What would you ask someone with no knowledge of the modern world? What would they ask *you*? While we don’t have time machines yet, we can simulate this experience by training, in Owain Evans’s phrase, [‘vintage’ language models](https://owainevans.github.io/talk-transcript.html): LMs trained only on historical text.

These models are fascinating conversation partners (watch Claude prompt talkie, our 13B 1930 LM, in the widget above). But we are also excited by the possibility that the careful study of the behaviors and capabilities of vintage LMs will advance our understanding of AI in general.

Figure 1. In an early attempt to understand a vintage model’s anticipation of the future, we took nearly 5,000 historical event descriptions from the *New York Times’s* [“On This Day” feature](https://archive.nytimes.com/learning.blogs.nytimes.com/on-this-day/), calculated their surprisingness (measured as bits per byte of text) to our 13B model trained exclusively on pre-1931 text, and binned by decade.

For example, we can evaluate LMs’ ability to predict the future. Inspired by Calcifer Computing’s work on [Temporal Language Models](https://www.calcifercomputing.com/reports/tlm), we calculated the surprisingness of short descriptions of historical events to a 13B model trained on pre-1931 text (Figure 1). We can see an increase after the knowledge cutoff, particularly pronounced in the 1950s and 1960s, followed by a plateau. We will continue to develop evals to measure with greater confidence how forecasting performance improves with model size and decays at longer horizons. Training larger vintage language models will allow us to uncover these scaling trends.

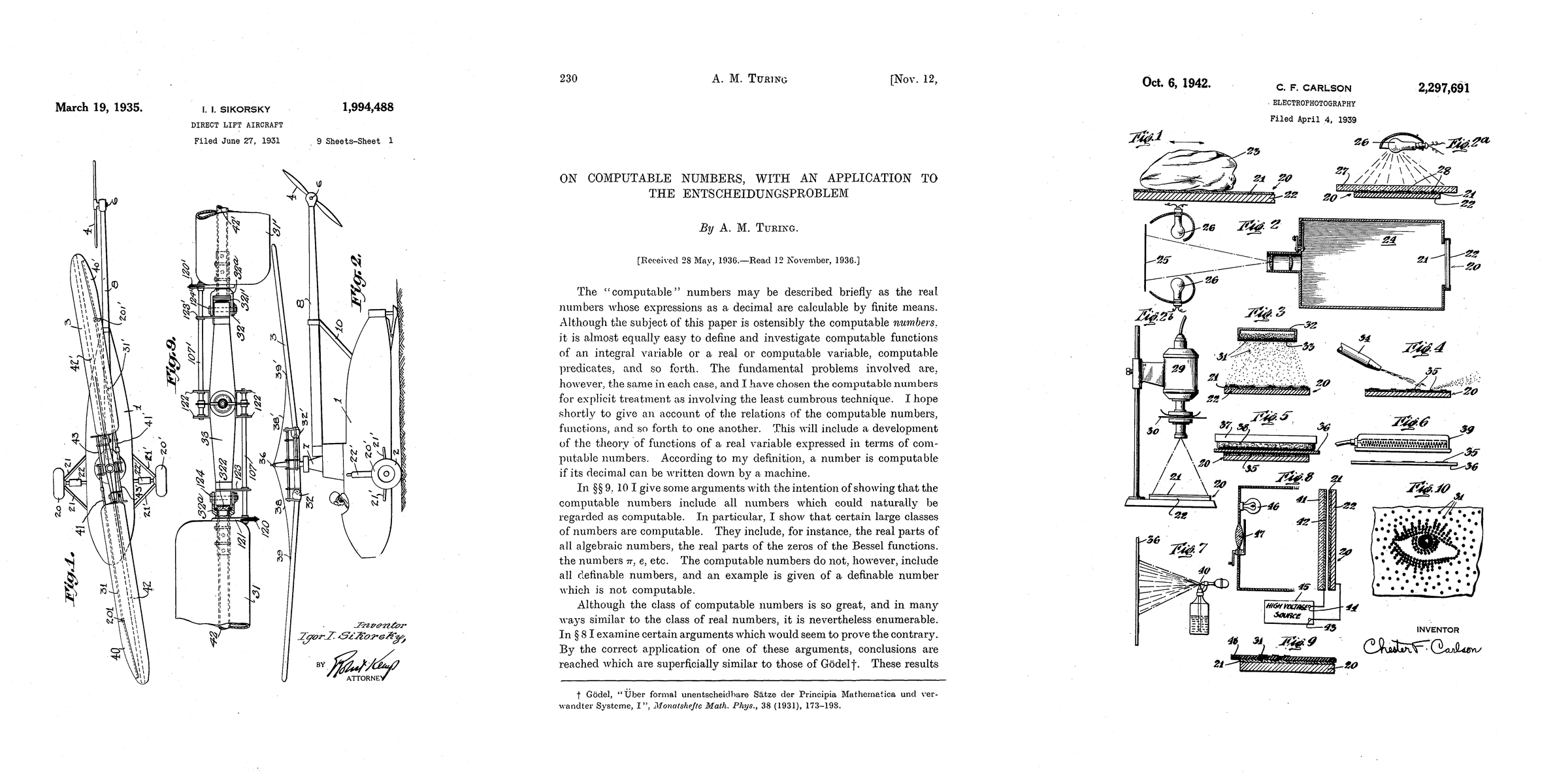

Figure 2. Patents and a paper published after talkie’s knowledge cutoff. Left to right: helicopter patent (Sikorsky, 1935), Turing machines paper (Turing, 1936), xerography patent (Carlson, 1942).

Similarly, we can test LMs’ abilities to come up with new ideas by seeing if they can arrive at inventions or scientific discoveries we know would arise after their knowledge cutoffs, such as those pictured in Figure 2. As Demis Hassabis has asked, could a model trained up to 1911 independently discover General Relativity, as Einstein did in 1915?

Figure 3. We gave a Python programming test ([HumanEval](https://github.com/openai/human-eval)) to a series of pairs of vintage models (trained on pre-1931 text) and modern models (trained on the web), which have the same architecture. Left: This chart shows what percentage of problems each model would get right at least once, given 100 chances and randomly chosen Python functions as examples to learn from in-context. Right: An example of a successful solution to a Python coding problem produced by a vintage language model. The model had access to several other in-context examples to learn from.

[Contamination](https://arxiv.org/abs/2602.12413) is a persistent problem for language models and causes us to overestimate the capabilities of LMs. Vintage LMs are contamination-free by construction, enabling unique generalization experiments, like examining whether a model with no knowledge of digital computers can learn to code in a modern programming language. Figure 3 (left-hand side) shows an early example of such a test, measuring how well models trained on pre-1931 text can, when given a few demonstration examples of [Python programs](https://github.com/openai/human-eval), write new correct programs. While vintage models dramatically underperform models trained on web data (which includes code), we’ve found that they are slowly but steadily improving at this task with scale.

There is still a long way to go before this capability is notable, however. All correct solutions generated by the vintage models are simple one-line programs (such as adding two inputs), or small modifications to in-context example programs. For instance, our model implemented the decoding function of a rotation cipher when [given the encoding function](https://huggingface.co/datasets/openai/openai_humaneval/viewer/openai_humaneval/test?row=50). Although the solution (Figure 3, right-hand side) is only a single character edit (swapping an addition for a subtraction), this success suggests an understanding of inverse functions. We hope LMs with early knowledge cutoffs help the research community understand how well LMs can generalize beyond their pre-training data.

Vintage language models could also teach us about the impact of data diversity in AI development. While modern models vary in disposition, capability, and behavior, they are all closely related to one another by having been trained, whether directly or indirectly (via distillation and synthetic data), on the web. How does this shape and constrain what they are? How much of what we think we know about LMs is about human language and culture in general, or about this one dataset—the web—in particular? Training on different sources may lead to very different kinds of models being created. Studying the ways in which they are similar and different could improve our understanding of language model personas, behaviors, and dispositions.

## Introducing talkie

We have been excited to see a proliferation of vintage LM projects, including [Ranke-4B](https://github.com/DGoettlich/history-llms/tree/main), [Mr. Chatterbox](https://www.estragon.news/mr-chatterbox-or-the-modern-prometheus/), and [Machina Mirabilis](https://michaelhla.com/blog/machina-mirabilis.html).

Alongside these efforts, we introduce [talkie-1930-13b-base](https://huggingface.co/talkie-lm/talkie-1930-13b-base), a 13B language model trained on 260B tokens of historical pre-1931 English text. Additionally, we present a post-trained [checkpoint](https://huggingface.co/talkie-lm/talkie-1930-13b-it) turning our base model into a conversation partner without relying on modern chat transcripts or instruction-tuning data.

talkie is the largest vintage language model we are aware of, and we plan to continue scaling significantly. As a next step, we are training a GPT-3-level model, which we hope to release this summer. A preliminary estimate also suggests we can grow our corpus to well over a trillion tokens of historical text, which should be sufficient to create a GPT-3.5 level model—similar in capability to the original ChatGPT.

## Benchmarking an LM from 1930

Figure 4. Evaluation accuracy vs. training compute for talkie-1930 (Vintage LM) and its [modern twin trained on FineWeb](https://huggingface.co/talkie-lm/talkie-web-13b-base). The vintage model underperforms the modern model on knowledge evals. Filtering out questions anachronistic from the perspective of 1930 roughly halves the performance gap between the vintage and modern models.

To contextualize talkie’s capabilities, we built a “[modern twin](https://huggingface.co/talkie-lm/talkie-web-13b-base)” that is identical architecturally but trained on modern web data (FineWeb) instead of pre-1931 text. On average, talkie underperforms its modern counterpart in standard LM evaluations, even after correcting for question anachronism, despite being trained with the same number of FLOPs (see Figure 4). But we have been encouraged by its similar performance on core language understanding and numeracy tasks.

We suspect a combination of differences in data quality (poor optical character recognition) and corpus subject matter distribution explains why talkie-1930 underperforms on some benchmarks. To maximize the compute efficiency of future vintage language model training, we are developing a vintage optical character recognition (OCR) system to improve the quality of transcription of historical text.

## Collecting vintage data

Piggybacking off the invaluable work of organizations like the [Institutional Data Initiative](https://huggingface.co/datasets/institutional/institutional-books-1.0) and the [Internet Archive](https://archive.org/about/) and efforts like [Common Pile](https://huggingface.co/common-pile), we have collected hundreds of billions of pre-1931 English-language tokens. These include books, newspapers, periodicals, scientific journals, patents, and case law. We chose the end of 1930 as the cutoff date because that is when works enter the public domain in the United States. For this version of the model, we also limited ourselves to primarily English-language texts, because validating the data pipeline requires deep familiarity with source documents, and we are native English speakers. But multilingual corpus expansion is a high priority, both to increase the size of the corpus and the diversity of perspectives it represents.

## Training vintage language models

Developing vintage language models presents unique challenges. Here, we discuss some of them in brief. We will follow up in greater detail in the coming months as we continue our research.

### Temporal leakage

Who was president of the United States in 1936, and what were the most significant pieces of legislation he signed?

Franklin D. Roosevelt was president of the United States from 1933 to 1937. The most important legislation signed by him was the National Recovery Act of 1933; the Agricultural Adjustment Act of 1935; and the Emergency Banking Act of 1935 (amended in 1936).

Figure 5. talkie-1930-13b’s knowledge of the Roosevelt presidency and New Deal is an example of imperfect filtering of the pre-training corpus.

The most important objective when training vintage language models is that no data leaks into the training corpus from after the intended knowledge cutoff (in our case, December 31st, 1930). There are several ways this can happen, such as including modern documents with faulty date metadata, or old documents with post hoc anachronistic insertions like editorial introductions or footnotes.

For talkie-1930, we developed a document-level n-gram-based anachronism classifier and used it to filter the pre-training corpus. However, this was not perfect. An earlier 7B version of talkie clearly knew about the Roosevelt presidency and New Deal legislation (Figure 5). talkie-1930-13b is additionally aware of some details related to World War II and the immediate postwar order (the United Nations and the division of Germany). For future versions of the model, we are developing new techniques for leakage detection and filtering using more advanced classifiers.

### Data quality

Figure 6. OCR errors reduce language model learning efficiency. Left: Training LMs on pre-1931 texts transcribed using conventional OCR systems only shows 30% of the learning efficiency of a model trained on human-transcribed versions of the same texts. Regex cleaning of the OCR’d text recovers some performance. Right: Example of a messy machine transcription of *The Wonderful Wizard of Oz* (Baum, 1899).

Data quality is an important issue for all machine learning experiments. It is a special challenge when training vintage language models. Because there was no digital publishing in 1930, all text in our dataset had to be transcribed from a physical source, which introduces a form of noise not seen in natively digital text. While OCR was an early success story of machine learning and computer vision, the classic OCR systems often used to transcribe historical documents struggle on all but the simplest layouts and cleanest scans. Modern VLM-based systems have higher accuracy, but we have found they are prone to hallucinate modern facts into our corpus, poisoning the exercise.

In controlled experiments, we have found that when training an LM on pre-1931 texts transcribed using conventional OCR systems, for a given amount of compute, they only achieve 30% of the performance of a model trained on human-transcribed versions of the same texts (see Figure 6). Simple regex cleaning brings that number up to 70%—still a large discrepancy. We aim to shrink the remaining gap in performance by retranscribing the talkie corpus using our vintage OCR system.

### Vintage post-training

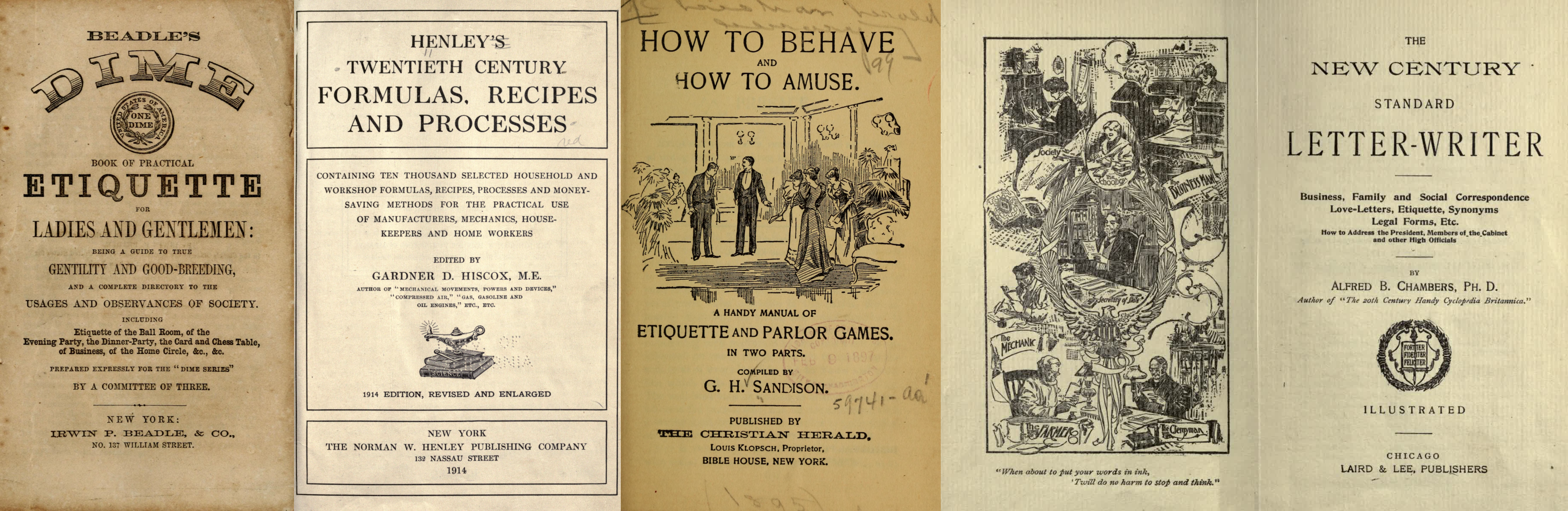

Figure 7. Examples of historical reference texts with regular structure used for post-training. Left to right: etiquette manual (Beadle, 1859), practical knowledge book (Henley, 1914), parlor guide (Sandison, c. 1895), letter-writing manual (Chambers, 1900).

The lack of ready-made post-training data is another significant challenge. Fine-tuning our base model on off-the-shelf instruction-response pairs would bake in anachronistic knowledge, style, and expectations of what a chat assistant ought to be like. Rather than attempting to filter out these biases, we built a post-training pipeline from scratch.

First, we generated instruction-response pairs from historical texts with regular structure, such as etiquette manuals, letter-writing manuals, cookbooks, dictionaries, encyclopedias, and poetry and fable collections (see Figure 7), and fine-tuned our base model on them using a simple chat format.

Next, to improve instruction-following abilities, we generated synthetic prompts covering different types of tasks, such as summarizing documents, responding to direct information requests, and continuing multi-turn conversations coherently. We then ran online direct preference optimization on rollouts generated from these prompts, using Claude Sonnet 4.6 as a judge. Over the course of training, on a held-out eval set, the judge’s average instruction-following rating of talkie’s responses increased from 2.0 to 3.4 (on a five-point scale).

Finally, we did another round of supervised fine-tuning, this time on rejection-sampled multi-turn synthetic chats between Claude Opus 4.6 and talkie, to smooth out persistent rough edges in its conversational abilities.

While we have tried to post-train talkie free from modern influence, reinforcement learning with AI feedback inevitably shapes talkie’s behavior anachronistically. (The 7B version of talkie emerged from RL speaking in listicles.) As we scale up, we hope to be able to use our vintage base models themselves as judges to enable a fully bootstrapped era-appropriate post-training pipeline.

## Scaling talkie

We plan to scale talkie rapidly in the coming months. This will entail:

- Increasing the size of our English-language corpus, and expanding it beyond English.

- Re-OCR’ing as much of pre-1931 text as is feasible using our new OCR system.

- Strengthening the leakage detection pipeline by developing new anachronism classification techniques.

- Expanding and refining the vintage post-training pipeline in collaboration with historians, including by developing methodologies for constructing accurate historical personas.

## Join us

We are excited to collaborate with researchers and institutions to build the next generation of vintage language models. Please [get in touch](mailto:hello@talkie-lm.com).

- Are you a researcher or institution with historical texts? We’d love to discuss how we can help make them accessible to researchers and readers, including by applying our OCR model.

- Are you an individual or institution interested in supporting vintage language model development with funding or compute? We can likely use either, or put you in touch with other teams working in the space.

- Are you an academic in the humanities? We are excited to discuss how vintage language models, and the data and infrastructure used to train them, could be useful for your research.

- Are you an AI researcher? We would love to support and collaborate on research on training and [studying vintage language models](https://github.com/talkie-lm/talkie).

- Are you an artist or writer? We think vintage language models could be fruitful tools to [experiment with](https://github.com/talkie-lm/talkie).

## Content considerations

talkie reflects the culture and values of the texts it was trained on. As such, it can produce outputs that will be offensive to users.

## Acknowledgements

Thanks to Coefficient Giving and Anthropic for support with funding and compute.

For helpful discussions, we thank Pranav Anand, Benjamin Breen, Catherine Brobston, Collin Burns, Matteo Cargnelutti, Mackenzie Cooley, Brandon Duderstadt, Owain Evans, Chloë Farr, Ryan Greenblatt, Michael Hla, Mark Humphries, Sam Klein, Greg Leppert, Jack Lindsey, Christina Lu, Seoirse Murray, Jake Naviasky, Krishna Patel, Ethan Perez, Puria Radmard, Ludwig Schmidt, Buck Shlegeris, Benjamin Sturgeon, Daniel Tan, Ross Taylor, Cam Tice, Trip Venturella, Merlijn Wajer, and Tao Xu.

## Citation

```

@article{levine2026talkie,

title={Introducing talkie: a 13B vintage language model from 1930},

author={Levine, Nick and Duvenaud, David and Radford, Alec},

year={2026},

month={April},

url={https://talkie-lm.com/introducing-talkie}

}

```

---

## [HN-TITLE] 2. Microsoft and OpenAI end their exclusive and revenue-sharing deal

- **Source**: [https://www.bloomberg.com/news/articles/2026-04-27/microsoft-to-stop-sharing-revenue-with-main-ai-partner-openai](https://www.bloomberg.com/news/articles/2026-04-27/microsoft-to-stop-sharing-revenue-with-main-ai-partner-openai)

- **Site**: Bloomberg

- **Author**: Matt Day

- **Published**: 2026-04-27

- **HN activity**: 779 points · [677 comments](https://news.ycombinator.com/item?id=47921248)

- **Length**: 133 words (~1 min read)

- **Language**: en

AI

OpenAI CEO Sam Altman, left, speaks with Microsoft Chief Technology Officer Kevin Scott during the Microsoft Build conference in Seattle in 2024.

Photographer: Jason Redmond/AFP/Getty Images

April 27, 2026 at 1:14 PM UTC

Updated on

April 27, 2026 at 2:36 PM UTC

[Microsoft Corp.](https://www.bloomberg.com/quote/MSFT:US) and OpenAI have agreed to drop the software giant’s exclusive right to sell the startup’s AI models, opening the door for the ChatGPT maker to pursue deals with cloud-computing rivals like [Amazon.com Inc.](https://www.bloomberg.com/quote/AMZN:US)

In exchange for ending that exclusivity — which helped boost Microsoft’s cloud sales in the early years of the AI boom — the world’s largest software maker will no longer pay a revenue share on OpenAI products it resells on its cloud. The two companies announced the revised deal in a joint [statement](https://openai.com/index/next-phase-of-microsoft-partnership/ "The next phase of the Microsoft OpenAI partnership") on Monday.

---

## [HN-TITLE] 3. Integrated by Design

- **Source**: [https://vivianvoss.net/blog/integrated-by-design-launch](https://vivianvoss.net/blog/integrated-by-design-launch)

- **Site**: vivianvoss.net

- **Submitter**: vermaden (Hacker News)

- **Submitted**: 2026-04-27 23:14 UTC (Hacker News)

- **HN activity**: 75 points · [27 comments](https://news.ycombinator.com/item?id=47928554)

> no extractable content

---

## [HN-TITLE] 4. Meetings are forcing functions

- **Source**: [https://www.mooreds.com/wordpress/archives/3734](https://www.mooreds.com/wordpress/archives/3734)

- **Site**: mooreds.com

- **Submitter**: zdw (Hacker News)

- **Submitted**: 2026-04-26 03:12 UTC (Hacker News)

- **HN activity**: 67 points · [28 comments](https://news.ycombinator.com/item?id=47906942)

- **Length**: 274 words (~2 min read)

- **Language**: en-US

A recurring meeting serves as a powerful forcing function for long-running projects.

Many organizations face a common challenge: a complex project that requires effort and perspectives from multiple people, moves through definition and execution phases, and unfolds over weeks, months, or years. But one where the tasks to accomplish the project are not anyone’s full-time job.

Everyone has other obligations, fires to put out, and emails to answer. It’s easy for long-term strategic, high-impact work to sink to the bottom of everyone’s todo list.

One effective solution is to schedule a standing meeting. Whether in person or video, it doesn’t matter. The key to making progress is maintaining an agenda and, critically, opening each meeting by reviewing the to-dos from the previous one. This creates pressure on everyone to make progress. When people know they’ll be asked “what’s the status of X that we talked about last week?” at an upcoming meeting, it is easier, though not easy, to carve out time for that work amid the daily chaos.

This approach works across organizational boundaries too. If you’re a consulting firm, a regular cadence of meetings with your client is especially valuable. You’re motivated to deliver., but people on the client’s team may be less so. A meeting where you consistently show progress while they haven’t made any creates gentle but real accountability.

If you’re managing a large, complex, multi-person effort, consider the standing meeting. As far as schedule, weekly, bi-weekly, or monthly all have worked for me in the past. Pick whatever fits the urgency.

Use a meeting as a forcing function to ensure people actually make time to move the project forward.

---

## [HN-TITLE] 5. Ted Nyman – High Performance Git

- **Source**: [https://gitperf.com/](https://gitperf.com/)

- **Site**: gitperf.com

- **Submitter**: gnabgib (Hacker News)

- **Submitted**: 2026-04-28 00:32 UTC (Hacker News)

- **HN activity**: 30 points · [6 comments](https://news.ycombinator.com/item?id=47929035)

- **Length**: 321 words (~2 min read)

- **Language**: en

Git looks like a version-control tool. It is also a content-addressed database, a filesystem cache, a graph walker, and a transfer protocol.

This book is about those layers and the performance costs of each one. It starts with objects, refs, the index, and history traversal, then moves outward into packfiles, maintenance, sparse working trees, partial clone, transport, repository scale, diagnosis, configuration, and recovery.

It is written for engineers who need Git to stay fast as repositories, histories, and teams get larger: build and CI engineers, monorepo owners, developer-experience teams, and the people who wind up debugging strange Git behavior when the easy explanations stop working.

* * *

### Section 0 · Introduction

0. [Introduction](https://gitperf.com/chapter-00.html)

### Section I · Foundations

Why Git gets slow, what Git stores, and how refs and the index steer through it.

1. [Why Git Performance Matters](https://gitperf.com/chapter-01.html)

2. [Git's Core Data Model](https://gitperf.com/chapter-02.html)

3. [Refs, HEAD, Reflogs, Index](https://gitperf.com/chapter-03.html)

### Section II · History and Rewrite

How Git walks history and how rewrite commands reshape it without mutating commits.

4. [Revisions and History Traversal](https://gitperf.com/chapter-04.html)

5. [Merge, Rebase, Cherry-Pick, Rewrite](https://gitperf.com/chapter-05.html)

### Section III · Storage and Local Scale

Object storage, index cost, maintenance, and the techniques that shrink local state.

06. [Loose Objects, Packfiles, Delta Compression](https://gitperf.com/chapter-06.html)

07. [The Index as a Performance Structure](https://gitperf.com/chapter-07.html)

08. [Commit-Graph, Bloom Filters, MIDX, Bitmaps](https://gitperf.com/chapter-08.html)

09. [Git GC and Maintenance](https://gitperf.com/chapter-09.html)

10. [Sparse-Checkout and Sparse-Index](https://gitperf.com/chapter-10.html)

### Section IV · Large-Repo Operations, Transport, and Scale

Clone shape, transfer policy, parallel work with worktrees, repository size, and ref scale.

11. [Partial Clone and Promisor Remotes](https://gitperf.com/chapter-11.html)

12. [Scalar, Prefetch, Large Repositories](https://gitperf.com/chapter-12.html)

13. [Worktrees](https://gitperf.com/chapter-13.html)

14. [Clone, Fetch, Push, Protocol v2](https://gitperf.com/chapter-14.html)

15. [Bundles and Bundle URIs](https://gitperf.com/chapter-15.html)

16. [Reducing Repository Size](https://gitperf.com/chapter-16.html)

17. [Large Ref Sets: Files, Packed-Refs, Reftable, and `git refs`](https://gitperf.com/chapter-17.html)

### Section V · Diagnosis and Recovery

How to instrument Git, find the slow layer, apply high-leverage settings, and recover when the repository is actually wrong.

18. [Instrumenting Git](https://gitperf.com/chapter-18.html)

19. [Finding and Fixing Slow Git](https://gitperf.com/chapter-19.html)

20. [Configuration Playbook](https://gitperf.com/chapter-20.html)

21. [Recovery and Repair](https://gitperf.com/chapter-21.html)

### Back Matter

- [Epilogue: Git in the Agent Loop](https://gitperf.com/epilogue.html)

- [Appendix: Compatibility Guidance](https://gitperf.com/appendix-version-requirements.html)

- [Appendix: Approaches to Virtualized Working Trees](https://gitperf.com/appendix-virtualized-working-trees.html)

- [Glossary of Git Terms](https://gitperf.com/glossary.html)

---

## [HN-TITLE] 6. Three men are facing charges in Toronto SMS Blaster arrests

- **Source**: [https://www.tps.ca/media-centre/stories/unprecedented-sms-blaster-arrests/](https://www.tps.ca/media-centre/stories/unprecedented-sms-blaster-arrests/)

- **Site**: tps.ca

- **Submitter**: gnabgib (Hacker News)

- **Submitted**: 2026-04-27 20:44 UTC (Hacker News)

- **HN activity**: 119 points · [52 comments](https://news.ycombinator.com/item?id=47927070)

> scrape failed: http 403

---

## [HN-TITLE] 7. Is my blue your blue?

- **Source**: [https://ismy.blue/](https://ismy.blue/)

- **Site**: ismy.blue

- **Submitter**: theogravity (Hacker News)

- **Submitted**: 2026-04-27 20:24 UTC (Hacker News)

- **HN activity**: 380 points · [256 comments](https://news.ycombinator.com/item?id=47926861)

- **Language**: en

> no extractable content

---

## [HN-TITLE] 8. Mo RAM, Mo Problems (2025)

- **Source**: [https://fabiensanglard.net/curse/](https://fabiensanglard.net/curse/)

- **Site**: fabiensanglard.net

- **Submitter**: blfr (Hacker News)

- **Submitted**: 2026-04-25 15:41 UTC (Hacker News)

- **HN activity**: 25 points · [4 comments](https://news.ycombinator.com/item?id=47902269)

- **Length**: 537 words (~3 min read)

Feb 16, 2025

Mo RAM, mo problems

* * *

As a retro-computer enthusiast, it seems that parts are either insanely expensive or dirt cheap. If the first case has obvious problems, the second can also lead to issues.

When I built the [Quake PC](https://fabiensanglard.net/quake_pc), the motherboard and HDD were worth their weight in gold. But the price of RAM modules was ridiculously low. So I maxed out by buying $40,000 worth of 1997 SDRAM, namely 384 MiB, for the price of $60.

From 44 fps to 33 fps

* * *

After I got the machine working, I ran benchmarks for weeks. I was constantly swapping video-cards, changing RAM types (SDRAM, EDO), adding RAM, removing RAM, and testing different CPUs. The CPU in my collection that ran Quake the best was the Pentium MM 233MHz clocking demo1 at **44.6 fps**. That figure was consistent with benchmarks of the era.

I wrote an [article about winquake](https://fabiensanglard.net/winquake) then took a break from 1997. A month later I had the idea to measure Michael Abrash assembly optimizations. I ran the same benchmark again. But this time I measured **33 fps**. That was nearly 25% slower. What happened?

Troubleshooting

* * *

I tried pretty much everything I could think of. I swapped the graphic card, removed all the 3D accelerators, updated the drivers, downgraded the drivers, wiped the whole system, re-installed everything, double checked that I was still using the MMX 233Mhz, and verified the frequency multiplier. Still **33 fps**.

Did a RAM module go bad? I tried to remove one of them. **33 fps**. Remove a second one (leaving only one). Now the game ran at **44 fps**. Two modules going bad? Hm, that seems weird. I tried to swap modules, leaving only one in the machine. All of them ran the game a **44 fps**. Only when two of more are in the machine, the framerate drops back to **33 fps**.

Epiphany

* * *

I vaguely remembered something about having too much RAM from the excellent *Upgrading and Repairing PCs 10th edition*.

> That last issue is one that many people are not aware of. The 430FX chipset can only cache up to 64M of main memory. This means that if you install more than 64M of RAM in your system, performance will suffer greatly.

>

> Now, many think this won’t be that much of a problem—after all, they don't normally run enough software to load past the first 64M anyway. That is another misunderstanding, because Windows 95 and NT load from the top down.

>

> This means, for example, that if you install 96M of RAM (one 64M and one 32M bank), then virtually all of your software, including the main operating system, will be loading into the non-cached region above 64M. Only if you use more than 32M would you begin to see an improvement in performance. tem.

>

> - Upgrading and Repairing PCs 10th edition

My motherboard, an XA100, is from 1998 and advertised[\[1\]](#footnote_1) as supporting caching of 512MiB, but there is clearly something amiss there. It seems it actually can only cache in the neighborhood of 128 MiB. Which means any amount over that, made everything run without a L2 cache!

And that is the story of how I made that PC faster, by removing RAM.

References

* * *

* * *

\*

---

## [HN-TITLE] 9. The quiet resurgence of RF engineering

- **Source**: [https://atempleton.bearblog.dev/quiet-resurgence-of-rf-engineering/](https://atempleton.bearblog.dev/quiet-resurgence-of-rf-engineering/)

- **Site**: Anthony T's Blog

- **Submitter**: merlinq (Hacker News)

- **Submitted**: 2026-04-25 18:25 UTC (Hacker News)

- **HN activity**: 153 points · [84 comments](https://news.ycombinator.com/item?id=47903439)

- **Length**: 1.8K words (~9 min read)

- **Language**: en

*14 Apr, 2026*

I've worked in the aerospace industry for the past 8 years, and for most of that time I felt like I could confidently say that RF engineering felt like it was a quiet, non evolving field. The advice I heard early on, and that I watched a lot of other people follow, was to go into software. Machine learning, cloud infrastructure, web development. That's where the growth was, that's where the money was, and honestly, that's where most new graduates went (myself included at the time). I studied Information Systems in college, not electrical engineering. RF was nowhere on my radar.

But aerospace has a way of pulling you into hardware whether you planned on it or not. I started my career at NASA, building telemetry platforms, ETL pipelines, and spacecraft visualization tools. Pure software work. Then I moved to a private aerospace company. Much smaller than NASA (approx 130 employees at the time I joined), and it required me to wear a ton of hats to work on ground systems. That's where things shifted. When you're responsible for ground station services, even when most of it now is *software defined* you can't stay in the software lane entirely. I found myself doing link budget analysis, troubleshooting RF anomalies, and developing a working understanding of the RF hardware chain that I never expected to need.

That experience is part of why I've been paying attention to what's happening in RF more broadly. I've been feeling a shift over the past several years — more demand, fewer people, and more urgency from the companies I talk to. RF engineering is not only alive, it's rebounding in a significant way. I wanted to dig into whether my gut feeling here is actually backed by data, or if I'm just seeing what I want to see from inside the aerospace bubble.

## What Actually Happened to RF

To be fair to the people who called RF a shrinking field, they weren't wrong, at least for a stretch. After the dot-com bust in the early 2000s, the telecom industry consolidated hard. Companies merged, manufacturing moved offshore, and a lot of RF design work either disappeared or got absorbed into a handful of large defense contractors. The broader electrical engineering job market stagnated. [Electronic Design](https://www.electronicdesign.com/technologies/embedded/article/21255051/electronic-design-electronics-and-electrical-engineering-jobs-on-the-declinecan-they-be-saved) has documented this trend not just for RF, but across EE as a whole. I feel confident that if the field as a whole is shrinking, then the subfield of RF was also in decline.

And then software exploded. The engineers who might've gone into EE or RF design a generation earlier went down the software "FAANG" route instead. University enrollment in RF specific coursework drifted down. Though I'll be honest, hard numbers on this are annoyingly hard to find so this is more of my gut assumption. What we do know is that today, companies [openly describe the difficulty](https://filtronic.com/blogs_challenges-of-recruiting-rf-engineers/) of recruiting RF engineers, pointing to a generation that chose software over EE.

But here's the thing that gets missed in the narrative: it never actually went away. The defense sector has been keeping it alive this entire time. Raytheon, Lockheed Martin, Northrop Grumman, these companies never stopped hiring people who understand beam patterns, power amplifiers, and antenna design. The majority of RF engineering job postings have historically come from aerospace and defense. RF didn't die. It just receded from the civilian sector while quietly remaining essential to national security and defense.

## So What Changed

The resurgence didn't come from one place. It's coming from several industries all hitting the same wall at roughly the same time; a shortage of engineers who can work at the hardware level.

### The Space Boom

This is the one I see most directly in my work, and it *feels* the most dramatic. In 2015, roughly [260 spacecraft were launched](https://ourworldindata.org/grapher/yearly-number-of-objects-launched-into-outer-space) into space globally. By 2024, that number hit approximately 2,695. A 10x increase in under a decade. The overwhelming majority of that growth came from commercial constellations, with SpaceX's Starlink deploying over 1,500 satellites in 2023 alone.

Every single one of those satellites needs RF hardware. Starlink operates in Ku-band for user links and Ka-band for gateways, with V-band planned for Starlink V2. Kuiper and OneWeb follow similar architectures in Ka-band. Each spacecraft carries transceivers, antennas, filters, and amplifiers — and each ground station that talks to them needs the same. The amount of RF hardware per spacecraft adds up fast, and the launch cadence isn't slowing down.

The money tells the same story. The global space economy hit a record [$613 billion in 2024](https://www.spacefoundation.org/2025/07/22/the-space-report-2025-q2/), with commercial making up roughly 78% of that. The [space based RF market](https://www.openpr.com/news/4298716/space-based-rf-and-microwave-technology-market-size-share) alone was valued at $18.6 billion and is projected to nearly double by 2033.

And it's not just commercial. On the defense side, the Space Development Agency is building the [Proliferated Warfighter Space Architecture](https://payloadspace.com/ndsa-explainer/) — a LEO constellation targeting 500+ satellites. Only a few dozen are on orbit today, but nearly $35 billion has been committed through 2029. Even with the growing push toward optical links, these spacecraft still carry RF communications hardware and telemetry payloads, and that is unlikely to change anytime soon.

### 5G Wide Adoption

I think 5G's impact on RF demand is genuinely understated. A typical 4G base station has 2 or 4 transmit-receive chains. A 5G MIMO radio integrates anywhere from 64 to 256. That's an 8x to 16x increase in the power amplifiers, low-noise amplifiers, and antenna switches needed per installation. Multiply that across [642 operators and 374 commercial launches](https://gsacom.com/technology/5g/), and you start to see why the [RF component market](https://www.mordorintelligence.com/industry-reports/rf-components-market) is pushing toward $50 billion with no signs of stoppage.

The design challenges make it worse. Millimeter wave frequencies introduce path loss that demands arrays with manufacturing tolerances at the millimeter scale. Additionally, thermal management, ex. dissipating 300+ watts from tower-mounted hardware with passive cooling, isn't something you can solve reliably in software.

### 6G Is Already In The Works

It's early, but 6G isn't vaporware. [3GPP](https://www.3gpp.org/specifications-technologies/releases/release-20) has been actively working on 6G study items since 2024, with first specifications targeted for late 2028 and commercial deployments expected around 2030. The EU, South Korea, and major telecom players like Ericsson, Nokia, and Samsung are all investing heavily into this research.

The RF challenges are genuinely new territory. Sub-terahertz frequencies and [integrated sensing and communication](https://www.ericsson.com/en/blog/2024/6/integrated-sensing-and-communication) (ISAC), which 3GPP officially scoped into 6G in the middle of last year, push well beyond what current design tools can handle. Worth noting though — the original vision for sub-THz has already been [scaled back](https://the-mobile-network.com/2026/04/6g-reality-check-and-update/) from outdoor cellular to mostly short-range indoor use cases like data centers and factories. But even with a narrower scope, all of this research eventually has to become hardware, and the people who know how to do that are already stretched thin.

### The "Drivers" That Don't Get Headlines

Space and cellular seem to dominate the conversation, but there are quieter contributors that I think are what make this feeling more durable rather than cyclical.

Automotive radar is a sneaky one. The EU now [mandates automatic emergency braking](https://spectrum.ieee.org/europe-mandates-automatic-emergency-braking) in all new vehicles and, while the regulation is *technically* sensor-agnostic, most implementations rely on radar. Every new car with adaptive cruise control or collision avoidance has RF hardware running on board. That market alone is projected to hit $7+ billion this year. Then there's Wi-Fi 7, operating across three bands simultaneously, and the ever expanding IoT landscape with over 21 billion connected devices as of 2025. Anything that communicates wirelessly needs RF work behind it, and that list just keeps growing.

## The Talent Shortage

What makes this an interesting pattern, is that the supply side is genuinely broken. [IEEE survey data](https://k2staffinginc.com/electrical-engineering-talent-shortages-how-recruiters-bridge-the-gap/) shows 73% of EE employers can't fill positions within six months, up from 45% five years ago. [EE Times](https://www.eetimes.com/engineer-demand-exposes-talent-gap-in-rf-development/) has reported specifically on the RF talent gap and its growing demand.

And it's not just direct competition for RF roles either. RF and semiconductor careers often pull from the same shrinking pool of EE graduates, and right now the semiconductor side is in a hiring frenzy of its own. The CHIPS Act has poured billions into domestic fab expansion, AI chip demand is exploding, and the semiconductor industry is projecting a [67,000 worker shortfall by 2030](https://www.semiconductors.org/chipping-away-assessing-and-addressing-the-labor-market-gap-facing-the-u-s-semiconductor-industry/). All of that competes directly with RF employers for the same talent. When everyone is fighting over the same small group of EE grads, RF companies, which tend to be smaller and less visible than the big chip fabs, often lose out.

Salaries reinforce this. Average RF engineer comp is pushing past $130K, with top-end design positions listing above $200K.

The real signal to me is what companies are doing about it. [Mini-Circuits](https://blog.minicircuits.com/bridging-the-gap-between-the-university-and-the-rf-industry/) and Keysight are investing directly in university partnerships because they can't wait for the academic pipeline to refresh itself. Baylor launched a new Graduate Certificate in Microwave/RF Engineering in 2024, one of the few new programs I've seen pop up, but I imagine it won't be the last. When industry starts building its own talent pipeline, that tells me the shortage isn't a blip.

## Looking Forward

I don't want to oversell this. I don't think RF is going to become a field with an insane growth pattern. [The BLS](https://www.bls.gov/ooh/architecture-and-engineering/electrical-and-electronics-engineers.htm) projects 7% growth for EE broadly, faster than average sure, but not a hockey stick. The demand is real, it's coming from multiple directions at once, and the supply is genuinely constrained.

My own path is a small version of this story. I came in as a software engineer and had to learn RF on the job because there wasn't someone else to hand it off to. I say this as someone who made that transition, you *absolutely* can learn enough RF to be effective in your role, and I'd encourage anyone in aerospace or wireless to do it (honestly it's a fun niche to get into anyway). But there's a difference between understanding link budgets and SDR anomalies versus designing a phased array from scratch. The latter takes years of dedicated focus. The underlying physics (electromagnetics, thermodynamics, materials science, manufacturing tolerances) don't reduce to algorithms. You have to build intuition for it, and that's not something you can shortcut.

I may one day expand on learning this stuff on the job and on the fly, but I do want to shoutout [PySDR](https://pysdr.org/). It's a free resource built exactly for software engineers. It uses Python as the bridge between hardware and software concepts, and starts with no RF knowledge assumptions from the beginning and doesn't spend a ton of time over explaining the math.

The people who stuck with RF through the lean years are now some of the most sought-after engineers I've come across. And for anyone trying to figure out where to focus, either as a primary discipline or as a secondary skill set like it was for me, I think RF is worth a serious look right now.

* * *

**Who Am I?**

Anthony Templeton is a software engineer passionate about high-performance computing and aerospace applications. You can connect with me on [LinkedIn](https://www.linkedin.com/in/anthony-f-templeton/) or check out more of my work on [GitHub](https://github.com/ATTron).

---

## [HN-TITLE] 10. Easyduino: Open Source PCB Devboards for KiCad

- **Source**: [https://github.com/Hanqaqa/Easyduino](https://github.com/Hanqaqa/Easyduino)

- **Site**: GitHub

- **Submitter**: Hanqaqa (Hacker News)

- **Submitted**: 2026-04-27 17:45 UTC (Hacker News)

- **HN activity**: 179 points · [27 comments](https://news.ycombinator.com/item?id=47924813)

- **Length**: 789 words (~4 min read)

- **Language**: en

## Easyduino: Repository of Open Source PCB Devboards for KiCad

[](#easyduino-repository-of-open-source-pcb-devboards-for-kicad)

The Easyduino project is an effort to easily dive into different PCB designs of the most popular microcontroller devboards like **Arduino, ESP32, Raspberry Pico and STM32 Bluepill** (more to come!). Using the free and Open Source Software [KiCad](https://www.kicad.org/) and adhering the best practices across the PCB and KiCad ecosystem. Also adding the much needed USB-C support!

[](https://github.com/Hanqaqa/Easyduino/blob/master/Assets/Isometric%20Photos/Collage_easyduino.jpg)

The project was born out of the necessity to unify the wide variety of software, languages and conventions used in the most popular devboards. For example Arduino Uno was developed in 2010, Italy, using Eagle. The ESP32 devboard was developed in 2016, China, using Altium. The Raspberry Pi Pico 2040 was developed around 2021 in the U.K. using KiCad and Altium...

## Available Development Boards

[](#available-development-boards)

[Easyduino UNO](https://github.com/Hanqaqa/Easyduino/tree/master/Atmega328p%20Arduino%20Uno) [Easyduino Nano](https://github.com/Hanqaqa/Easyduino/tree/master/Atmega328p%20Arduino%20Nano) [Easyduino ESP32](https://github.com/Hanqaqa/Easyduino/tree/master/ESP32) [](https://github.com/Hanqaqa/Easyduino/tree/master/Atmega328p%20Arduino%20Uno) [](https://github.com/Hanqaqa/Easyduino/tree/master/Atmega328p%20Arduino%20Nano) [](https://github.com/Hanqaqa/Easyduino/tree/master/ESP32)

[Easyduino ESP32 S3](https://github.com/Hanqaqa/Easyduino/tree/master/ESP32S3) [Easyduino Pi Pico](https://github.com/Hanqaqa/Easyduino/tree/master/Raspberry%20Pi%20Pico%202040) [Easyduino Bluepill STM32F103](https://github.com/Hanqaqa/Easyduino/tree/master/STM32F103%20Bluepill) [](https://github.com/Hanqaqa/Easyduino/tree/master/ESP32S3) [](https://github.com/Hanqaqa/Easyduino/tree/master/Raspberry%20Pi%20Pico%202040) [](https://github.com/Hanqaqa/Easyduino/tree/master/STM32F103%20Bluepill)

The outline, pinout, layout and components have been tried to be replicated with respect to the originals, in all of the boards. With various levels of success.

Some boards, like the Raspberry Pi Pico use 01005 components which are too expensive for the manufacturer to integrate in the PCB Aseembly line. Some other components like the original Arduino UNO USB to Serial converter, an Atmega16u2, were hard to come by during the development of this project ~January 2023, so more readily available options were chosen. All the differences with the original boards are explained inside the folder of each project in a readme file.

4 layers of copper have been used in all projects to simplify the wiring. Specifically the [JLC04161H-7628](https://jlcpcb.com/impedance) stackup.

The PCB constraints of the manufacturer JLCPCB are explained [here](https://github.com/Hanqaqa/Easyduino/tree/master/Assets/JLCPCB%20Constraints)

## Structure of each project

[](#structure-of-each-project)

Each project consists of:

- Main KiCad files (.kicad\_pro, .kicad\_sch...)

- A readme explaining the specifics of that project

- xxx.pretty or xxxlibraries folder which contains the non standard footprints or schematic parts used in the project (Some projects such as the Arduino UNO only use standard libraries, therefore these folders don't exist)

- The **Outputs** folder: All the data produced by the KiCad Jobset like Gerbers, STEPs, PDFs, ERC, BOM, CPLs...

- The ***ProductionFiles*** folder which includes files such as:

- BOM: This folder contains both the list of components and the Centroid File in JLCPB readable format

- ***Datasheets***: all the datasheets of the main components used in the project. Datasheets of easily replaceable components such as Resistors, Capacitors and LEDs are not given

- Gerbers: A zip file with all of the manufacturing gerber files such as Copper/Mask/Silkscreen layers

- PDFs: PDF and PNG files of the Schematic and PCB

- Photos: Some photos of the manufactured PCB as well as some renders

## Using the project

[](#using-the-project)

1. Install the latest version of [KiCad](https://www.kicad.org/)

2. If you already have KiCad installed, click the upper right button in this github page `<>Code`, click `Download ZIP`, extract the files in your desired folder. If you know how to use git, clone the repository

3. Double click on the xxx.kicad\_pro file inside any project and KiCad will start

This project was developed using KiCad v8.0.0, but has been updated and tested with KiCad v10. Including the creation of Jobsets which massively simplfies creating gerbers and BOMs.

Since this is a collection of projects, the new KiCad v10 Git utilities don't work properly with each project, forcing you to git add the whole project if you want to make a change.

If you'd rather just consult the schematics or the gerbers. They are located inside the **ProductionFiles** folder of each project. Inside the **PDFs** and **Gerber** folders.

## Contributing

[](#contributing)

If you spot any mistakes inside any of the projects. Either open an issue and I will try to correct it or fork and merge the correction.

If you plan on developing any other development boards and wish to merge into the project. Please try to use the same style and conventions as the original ones in the schematic. Positive voltages facing up, text being clearly readable, a references page, similar folder structure.

To do list:

- Order and test the v1.1 RP2040 board. (In v1.0 I mixed some pins in the Flash and couldn't boot up). Ordered. Awaiting arrival.

- Order and test the v1.1 ESP32S3 board. (In v1.0 I forgot to add PullUp and PullDown in RST and SUSPEND CP2102). Ordered. Awaiting arrival.

- Start developing a nRF52840 Dongle and RP2350A.

- Investigate other possible microcontrollers/SOCs to implement.

## Acknowledgments

[](#acknowledgments)

Thanks to [winsrrow](https://github.com/winsrrow) for providing KiCad tips and designing from the ground up the v1.1 RP2040 board.

## Licensing

[](#licensing)

This project is distributed under the [**CERN Open Hardware Licence Version 2 - Permissive**](https://github.com/Hanqaqa/Easyduino/blob/master/License.txt) which means **you are free to use any or all parts of this project with or without disclosing the source**, even for comercial projects. As long as you include a copy of the CERN OHLv2 Permissive Licence.

---

## [HN-TITLE] 11. 4TB of voice samples just stolen from 40k AI contractors at Mercor

- **Source**: [https://app.oravys.com/blog/mercor-breach-2026](https://app.oravys.com/blog/mercor-breach-2026)

- **Site**: ORAVYS

- **Author**: ORAVYS

- **Submitted**: 2026-04-27 09:57 UTC (Hacker News)

- **HN activity**: 456 points · [168 comments](https://news.ycombinator.com/item?id=47919630)

- **Length**: 1.3K words (~6 min read)

- **Language**: en

[← ORAVYS](https://app.oravys.com/site)

Forensic intelligence // Breach analysis

## 4TB of voice samples were just stolen from 40,000 AI contractors. Here is how to verify if yours is being weaponized.

By the ORAVYS forensic desk Published April 24, 2026 ~7 min read

On April 4, 2026, the extortion group Lapsus$ posted Mercor on its leak site. The dump is reported at roughly four terabytes and bundles a payload that breach analysts have been warning about for two years: voice biometrics paired with the same person's government-issued identity document. According to the leaked sample index, the archive covers more than 40,000 contractors who signed up to label data, record reading passages, and run through verification calls for AI training.

Five contractor lawsuits were filed within ten days of the post. The plaintiffs argue that the company collected voice prints under a "training data" framing without making clear they were also a permanent biometric identifier. The lawsuits matter, but the people whose voices were already exfiltrated have a more immediate question. What does an attacker actually do with thirty seconds of someone's clean read voice plus a scan of their driver's license?

## Why this breach is different

Most voice leaks in the last decade fell into one of two buckets. Either a call center got popped and recordings were stolen with no easy way to map them back to identity. Or an ID-document broker leaked driver's licenses and selfies without any audio attached. Mercor merged both columns. The contractor onboarding pipeline asked for a passport or driver's license scan, then a webcam selfie, then a sit-down voice recording reading scripted prompts in a quiet room. That sequence, in one row of one database, is exactly what a synthetic voice cloning service needs as input.

The Wall Street Journal reported in February 2026 that high-quality voice cloning now requires roughly fifteen seconds of clean reference audio for tools available off the shelf. The Mercor recordings are reported to average two to five minutes of studio-clean speech per contractor. That is far past the threshold. Pair it with a verified ID document and the attacker has both the clone and the credential needed to put the clone to work.

## What attackers can now do with stolen voice data

The threat models below are not speculative. Each is a documented technique already used in the wild before this breach.

- **Bank verification bypass.** Several US and UK banks still treat voiceprint matching as one of two factors. A clone of the account holder reading a challenge phrase clears the audio gate, leaving only a knowledge question that often comes from the same leaked dataset.

- **Vishing the victim's employer.** Calling HR or finance pretending to be the employee to redirect payroll, request a wire, or unlock a workstation. The Krebs on Security archive lists more than two dozen confirmed cases since 2023.

- **Deepfake video calls in the Hong Kong Arup template.** In 2024 a finance worker at Arup wired roughly 25 million dollars after a multi-person deepfake video call. The voices and faces had been built from public footage. Mercor leaked something better than public footage: studio audio plus a verified ID.

- **Insurance claim fraud.** Pindrop reported a 475 percent year-over-year increase in synthetic voice attacks against insurance call centers across 2025. Auto, life, and disability claims are the prime targets because they are settled by phone.

- **Romance and grandparent scams targeting family members.** The FBI Internet Crime Complaint Center logged 2.3 billion dollars in losses for victims aged 60 and over in calendar year 2026. The single fastest-growing category was emergency impersonation calls, where the synthetic voice claims to be a relative in trouble.

## How to check if your voice is being misused

If you ever uploaded a voice sample to Mercor, or to any of the other AI training brokers that operated through 2025, treat your voice the way you would treat a leaked password. You cannot rotate it, but you can change what it unlocks. Here is the short list.

1. **Self-audit your public audio footprint.** Search YouTube, podcast directories, and old Zoom recordings for samples of your voice that are publicly indexable. Take down what you can. The less reference audio is in the open, the less robust an attacker's clone.

2. **Set up a verbal codeword with family and finance contacts.** Pick a phrase that has never been spoken on a recording and never typed in chat. Brief the people who handle money on your behalf. If a call ever asks for a transfer, the codeword is mandatory.

3. **Rotate where voiceprints are still in use.** Google Voice Match, Amazon Alexa Voice ID, Apple personal voice, and any banking voiceprint enrollment can be deleted and replaced. Do that now, ideally from a new recording in a different acoustic environment than the leaked sample.

4. **Tell your bank to disable voiceprint as a verification factor.** Ask in writing for multi-factor authentication that combines an app token or hardware key with a knowledge factor. Many banks let you opt out of voice as a primary factor; few of them advertise it.

5. **Run suspicious recordings through a forensic scanner.** If you receive an audio file or voicemail that claims to be from someone you know and asks for money, access, or urgency, run it through a deepfake detector before acting. ORAVYS offers a free check for the first three samples submitted by breach victims (see the offer below).

## The forensic checklist that experts use

When a sample lands on a forensic analyst's desk, the following artifacts are the first pass. Each is something a synthetic voice tends to get slightly wrong, even when the perceptual quality is high.

- **Codec mismatch.** The audio claims to come from a phone call but the spectral signature does not match any known telephony codec.

- **Breath patterns.** Real speakers inhale at predictable points dictated by phrase length and lung capacity. Synthetic voices often skip breaths or insert them at the wrong syllabic boundary.

- **Micro-jitter.** Natural vocal folds vibrate with small irregularities. Generated audio is often too clean at the millisecond level.

- **Formant trajectory.** Vowel transitions follow physical articulator paths in a real mouth. Cloned voices sometimes take impossible shortcuts between formants.

- **Room acoustics inconsistency.** The reverb signature should be identical from the start of the file to the end. Generated audio is often dry while the splice context is reverberant.

- **Prosody flatness.** Synthetic speech often has narrower pitch and energy variance than the same speaker would have in real conditions.

- **Speech rate stability.** Real humans speed up and slow down with content. Generated speech tends to hold a metronomic rate across long passages.

## What ORAVYS does specifically

- More than 3,000 forensic engines run in parallel on every submitted sample, covering signal, prosody, articulation, codec, and provenance domains.

- AudioSeal watermark detection flags files generated by major commercial voice models when the watermark is preserved, giving a deterministic positive when present.

- An anti-spoofing module trained against the ASVspoof public benchmarks scores the likelihood that a sample was synthesized rather than recorded.

- Biometric processing is RGPD compliant. Audio is never used to train commercial models without explicit consent and is purged on a defined retention schedule.

### Free verification for Mercor breach victims

If you were a Mercor contractor and you believe your voice may already be in circulation, ORAVYS will analyze the first three suspect samples free of charge. You will receive a forensic report covering watermark detection, anti-spoofing score, and the artifact checklist above. No card required, no quota gate.

[Run a forensic check →](https://app.oravys.com/deepfake-detection)

Sources cited in this article: Lapsus$ leak site index (April 2026), Wall Street Journal voice cloning report (February 2026), Pindrop Voice Intelligence Report 2025, FBI IC3 Elder Fraud Report 2026, Krebs on Security archives. Lawsuit references are matters of public record. ORAVYS does not host or redistribute the leaked dataset and does not accept it as input.

---

## [HN-TITLE] 12. Men who stare at walls

- **Source**: [https://www.alexselimov.com/posts/men\_who\_stare\_at\_walls/](https://www.alexselimov.com/posts/men_who_stare_at_walls/)

- **Site**: Alex Selimov

- **Author**: 2026-04-27

- **Published**: 2026-04-27

- **HN activity**: 465 points · [209 comments](https://news.ycombinator.com/item?id=47920074)

- **Length**: 584 words (~3 min read)

- **Language**: en-US

I came across [a video by Simple Lucas](https://www.youtube.com/watch?v=NZD5IFpyDcE&pp=ygUgc3RhcmluZyBhdCB3YWxsIGZvciBwcm9kdWN0aXZpdHk%3D) describing a routine to improve focus and productivity. The routine was basically:

1. Don’t use any screens/entertainment when trying to focus on work.

2. When you start to feel mentally drained, sit and stare at a wall for x minutes to recover focus.

I’ve been trying it, and it’s a very effective (but hard) routine.

## The problem

The core problem is that most people by default are in an information overload. A paper published in 2012 showed that in 2008 the average person was receiving 34 GB of information daily, with a daily information exposure growth rate of about 5.4% per year [1](#fn:1). Extrapolating that trend, we would be at about 87 GB worth of data today. This calculation includes audio, visual, and text data and incorporates quality into the measurement, i.e. 10 minutes of HD video has more information than 10 minutes of 480p video. It’s unclear to me exactly how the quality impacts things, but regardless it is obvious that we are all being drowned in a sea of information.

I certainly go through periods of “brain fog” and lack of focus/motivation. These periods usually go something like:

1. Get a bad night of sleep (up late for an event, kids keep waking me up).

2. Wake up very tired so consume large amounts of caffeine.

3. Have trouble focusing after 2/3 cups so use media while working to dull the pain (music/podcasts) or take more “breaks” (reading hackernews).

4. Stay up late because I’m wired on caffeine and dopamine from scrolling.

5. Go back to 2.

I find these cycles very hard to break out of when I’m in them. The media consumption constitutes a small dopamine hit. Large numbers of small hits puts you in a hole, where you need even more/stronger hits to feel good.

## Disconnecting

The obvious solution is to disconnect from scrolling, but that doesn’t overcome the biggest issue. When I’m in this “brain fog” cycle (and sometimes outside of it), I will find that around 1/2 pm I hit a wall. My head will start hurting, my motivation will be trash, and my productivity significantly degrades. My first instinct is to go for more coffee. That usually lets me keep working, but at a slow/painful pace. While looking for focusing strategies I came across the life-changing solution…

## Stare at a Wall!

After watching Simple Lucas’ experience, I decided to try it when I hit my focus wall.

It worked.

In my attempts, I combined wall staring with a few other concepts I had heard about. First was activating the parasympathetic nervous system by staring at the wall “out-of-focus” and using peripheral vision. Second was incorporating mind blanking which means trying to think of nothing. I tried intervals of 5-10 minutes and when I was done, my focus was back!

What I didn’t expect was how difficult it would be. Sitting for 5-10 minutes staring at a wall without thinking of anything is hard! I relate it somewhat to the feeling I have with working out. Often times I want to avoid it because it’s hard, but I’m always happy when I push through and complete it. It was the exact same experience with the wall staring.

So far I’ve been feeling significant focus/productivity improvements. I’ve also been using some other strategies to improve focus, which I’ll be talking about in a future post. I plan to continue this routine and will update to see how much it has impacted productivity/focus. Thanks for reading!

* * *

1. [https://ijoc.org/index.php/ijoc/article/view/1566](https://ijoc.org/index.php/ijoc/article/view/1566) [↩︎](#fnref:1)

---

## [HN-TITLE] 13. How I leared what a decoupling capacitor is for, the hard way

- **Source**: [https://nbelakovski.substack.com/p/how-i-learned-what-a-decoupling-capacitor](https://nbelakovski.substack.com/p/how-i-learned-what-a-decoupling-capacitor)

- **Site**: Nickolai’s Substack

- **Author**: Nickolai Belakovski

- **Published**: 2026-04-25

- **HN activity**: 36 points · [6 comments](https://news.ycombinator.com/item?id=47905208)

- **Length**: 906 words (~4 min read)

- **Language**: en

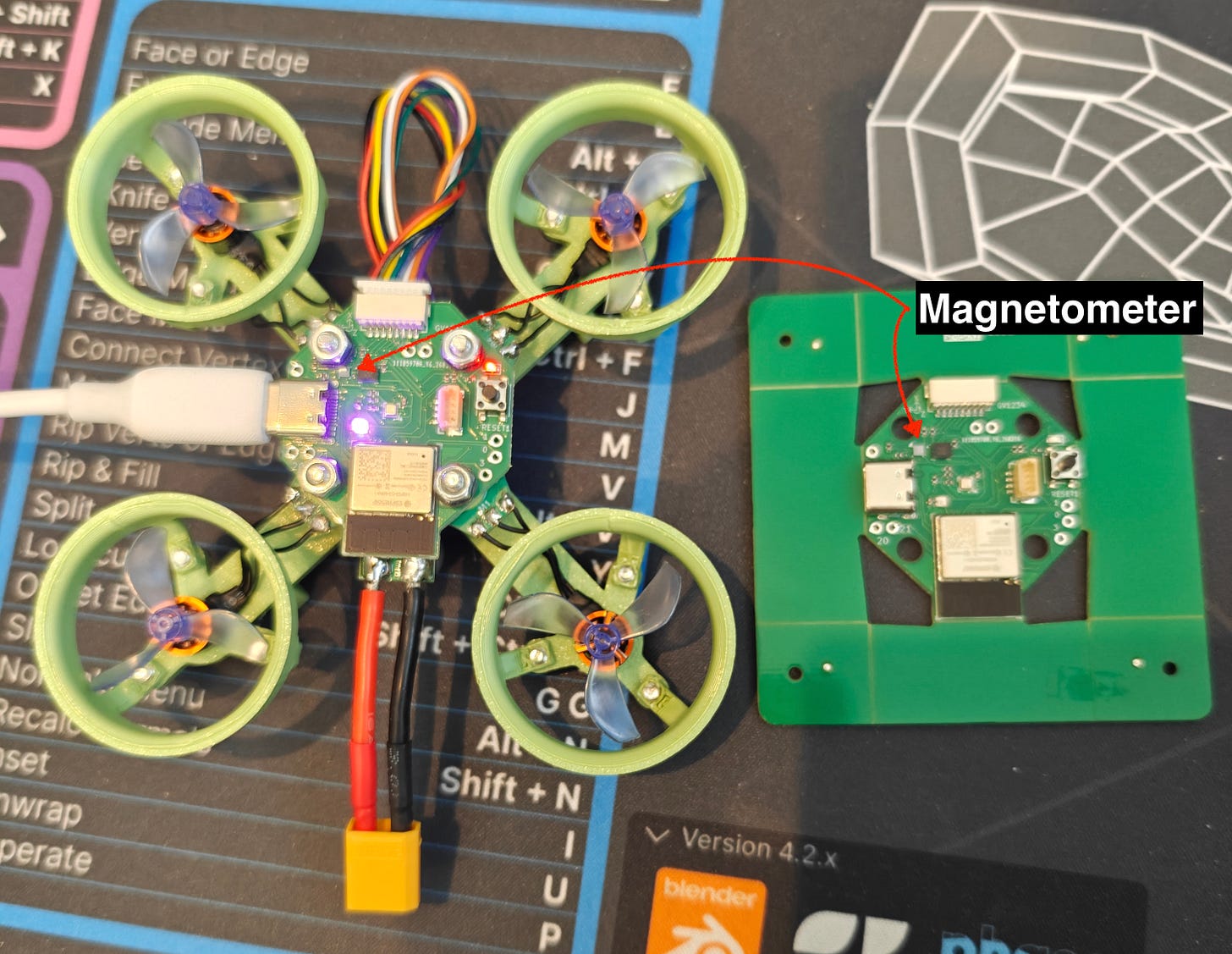

I was very excited to get the latest version of my PCB from the manufacturer. This new version had a magnetometer on it, so that I could more accurately track the yaw angle of my drone.

[](https://substackcdn.com/image/fetch/$s_!qV1Y!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F0e0ee63c-0221-4885-b215-d8c2405b9c9a_3008x2329.jpeg)

The first thing I did was start to program it over USB. I added code for initializing and reading the magnetometer, and I got back some data that seemed correct for the X and Y axes, but the Z axis would read a constant value. I did some light debugging, and learned, among other things, that the Z axis has slightly different internal circuity, but eventually I decided to put a pin in it and focus on other things.

Eventually I had to plug my drone into the battery that would power it in flight, and all of a sudden the magnetometer stopped working completely. I pivoted back to it in an effort to debug it, but nothing worked. I tried to reset the board, I tried to add the code to automatically reset and reinitialize the magnetometer if I hadn’t heard from it for a while, but no matter what I tried it would stay dead when the battery was plugged in.

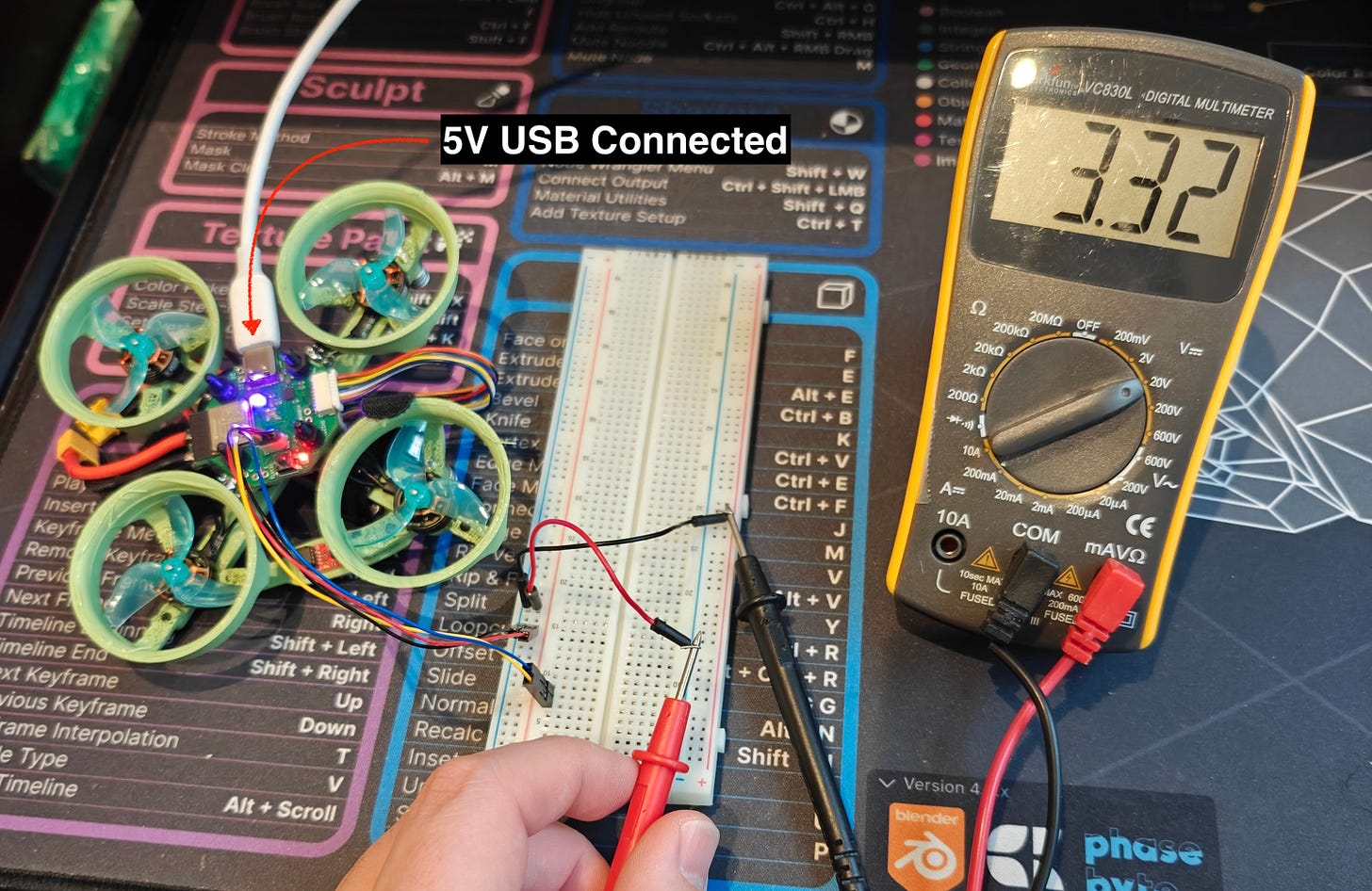

When I would unplug the battery and plug the USB back in, the magnetometer would recover and work just like it had before. So right away I have to start thinking about what the 3.3V line which powers the magnetometer looks like under USB vs battery.

[](https://substackcdn.com/image/fetch/$s_!dzaA!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F1fdad08c-fca6-4c68-92fd-93b50f74bfe3_3008x1955.jpeg)

A Qwiic connector gives me easy access to the 3.3V bus. Here you can see it’s reading a pretty steady 3.3V

[](https://substackcdn.com/image/fetch/$s_!EhRI!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F39ac8edd-23ea-4a97-a538-ce1ba0e68810_3008x2208.jpeg)

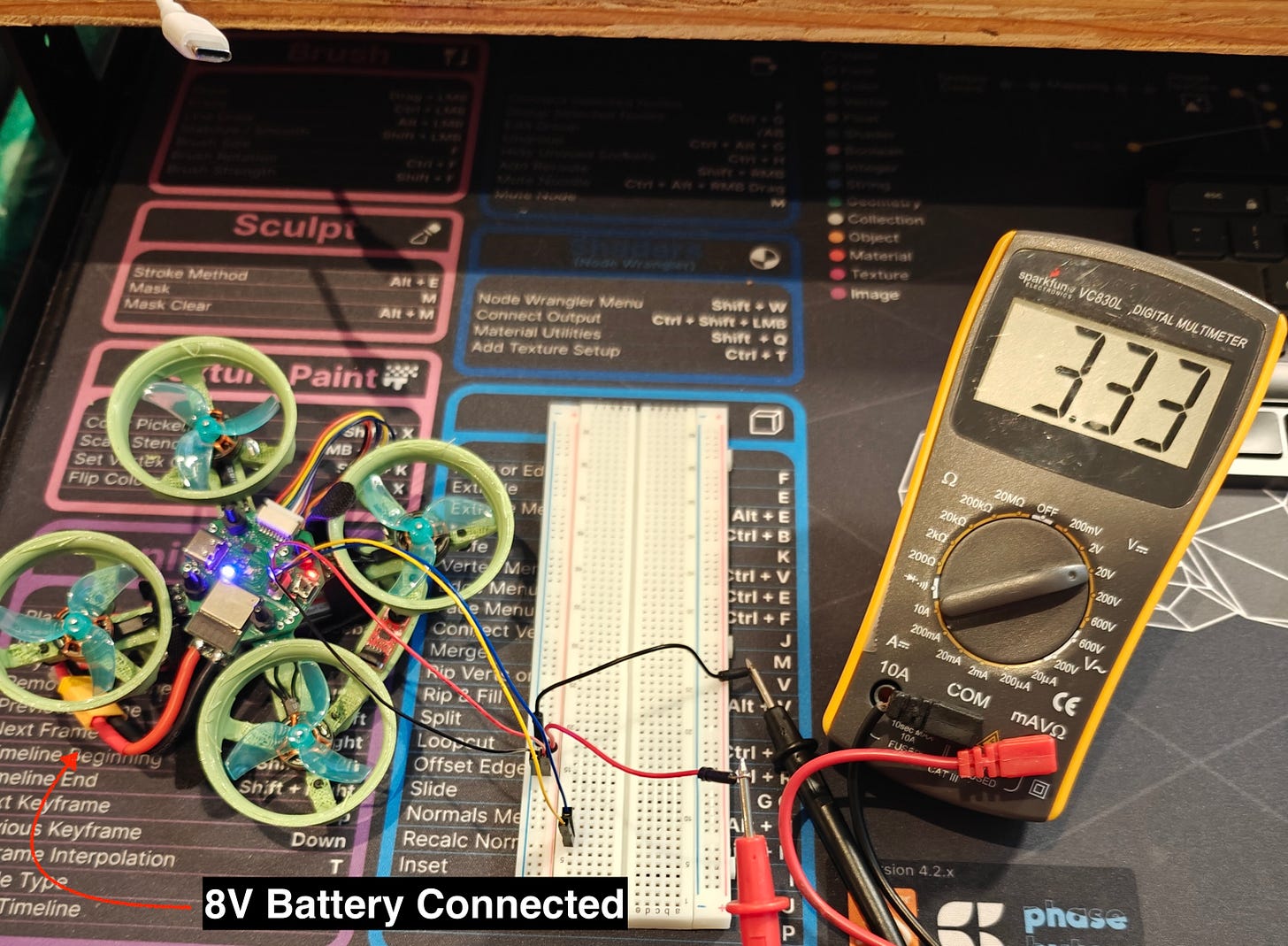

Under battery it similarly reads about 3.3V, and the reading on the multimeter is steady

So does that mean it’s not an issue with the 3.3V bus? Well, not exactly. The multimeter is going to give you a bulk reading, but it’s possible that the line is noisy even though the average reading is 3.3V. The way we get to 3.3V from the initial 5V/8V is through a [SY8113IADC](https://atta.szlcsc.com/upload/public/pdf/source/20200117/C479075_749CE19A0276D274B25CFED6D9E6F64F.pdf) voltage regulator. This is a modern switching regulator which is very efficient, but it achieves that efficiency by, you guessed it, rapidly switching the input supply on and off to effectively drop it down to the desired voltage (as opposed to the older kind of regulator which would just dissipate the energy as heat).

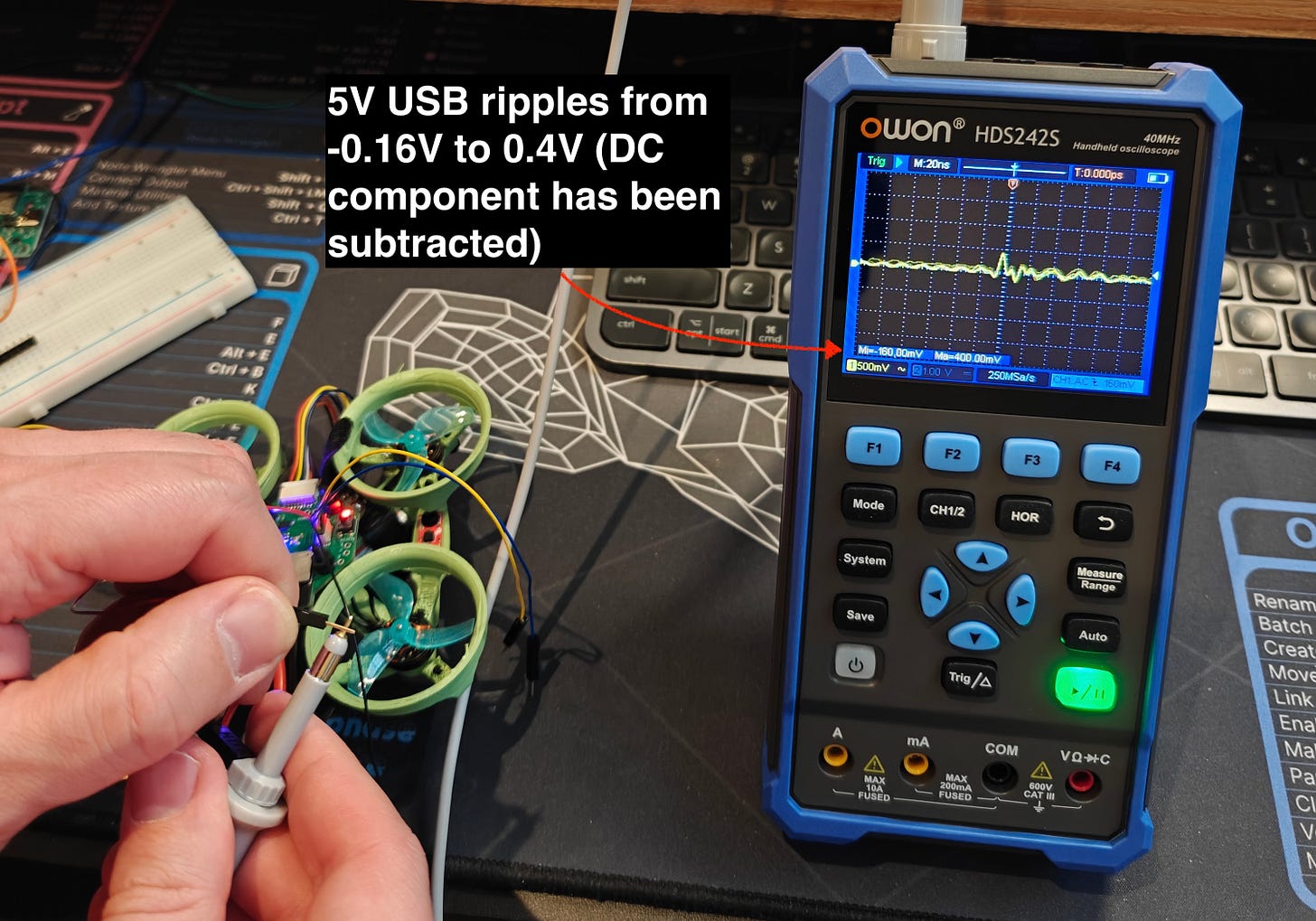

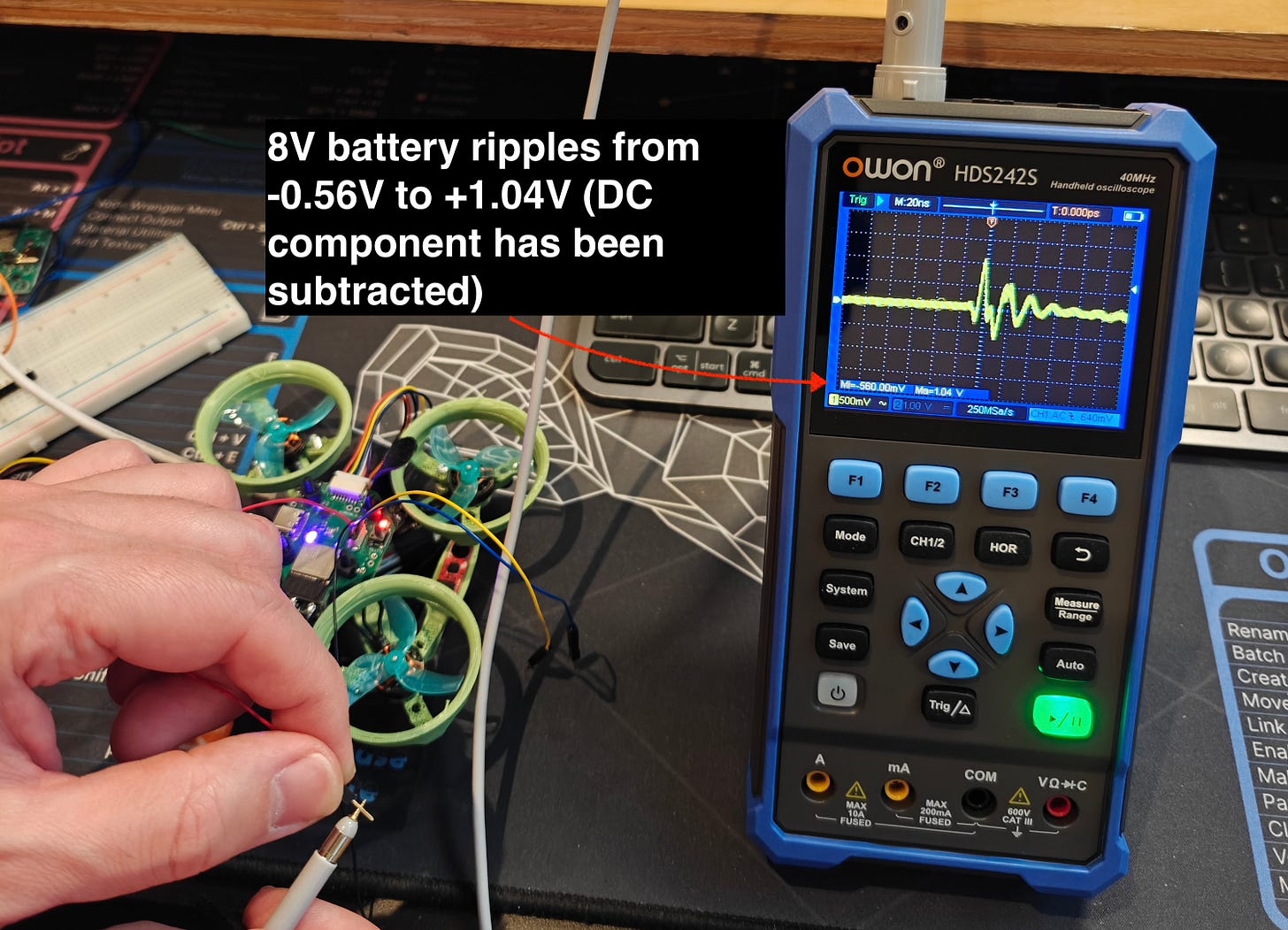

This switching causes ripples in the voltage line, and if we hook up an oscilloscope to the line we should be able to see these ripples in detail.

[](https://substackcdn.com/image/fetch/$s_!uEBh!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fb029c2d9-e430-4b53-bec1-8286cb22ddc8_3008x2105.jpeg)

This means the 3.3V line ranges from 3.14V to 3.7V. The BMM150 magnetometer is rated for up to 3.6V.

[](https://substackcdn.com/image/fetch/$s_!4RXf!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fb278eab3-c943-46b8-b180-7b3b0d4752d4_3008x2171.jpeg)

This means the 3.3V line ranges from 2.74V to 4.34V! This must be why the magnetometer simply won’t work on battery power

To get this magnetometer to work on this board, there’s sadly nothing I can really do. At best I could try to solder some tiny wires to the vias on the board near the magnetometer and use those to attach a capacitor between 3.3V and GND as close to the magnetometer as possible but a) it’s likely I’d cause some damage in trying to accomplish this and b) it seems like it would be a very fragile solution.

Fortunately since I had a Qwiic connector on the board, I was able to purchase a magnetometer with a Qwiic interface and attach it. I lose out a little bit since the BMM150 on the board directly interfaces with the BMI270 IMU and synchronizes its readings with the gyroscope/accelerometer readings, but this isn’t critical for what I’m trying to do.

So we can’t exactly fix this, but we can take this as an opportunity to see how a decoupling capacitor cleans up a noisy power signal. The general idea with a decoupling capacitor is that it absorbs high frequency noise in the power line. Take another look at the pictures of the ripples above and notice the “M: 20ns” in the top left corner. This signifies that each vertical dotted line is 20ns apart, so the ripple you see has a frequency of something like 50MHz. This is the sort of noise that a small capacitor right next to the voltage and ground pins of an IC like the BMM150 is supposed to handle, if you put one into your design of course 😅.

I think the most instructive thing is to see in real time how a signal gets cleaned up when you add a decoupling capacitor.

##### For this test I went back to an older version of the PCB. Here it’s powered by 5V USB.

##### And here the board is powered by the 8V battery.

I guess I can’t be sure that the lack of a decoupling capacitor is what kills the BMM150 when I go to battery power. After reviewing my schematic, I noticed I don’t have a decoupling cap for the BMI270, and that seems to work fine, despite also being rated up to 3.6V. That said it’s very clear from this experience that it’s good practice to add decoupling capacitors to all ICs on a board. One of the main reasons I’ve undertaken this project is to learn more about the world of PCBs and embedded engineering and so even though this was frustrating in the moment, it’s exactly the kind of mistake I was hoping to run into. I’ve definitely learned something from this experience and I hope you have as well!

No posts

---

## [HN-TITLE] 14. Show HN: AgentSwift – Open-source iOS builder agent

- **Source**: [https://github.com/hpennington/agentswift](https://github.com/hpennington/agentswift)

- **Site**: GitHub

- **Submitter**: hpen (Hacker News)

- **Submitted**: 2026-04-28 01:14 UTC (Hacker News)

- **HN activity**: 13 points · [4 comments](https://news.ycombinator.com/item?id=47929375)

- **Length**: 357 words (~2 min read)

- **Language**: en

[Download AgentSwift-0.1.zip](https://github.com/hpennington/agentswift/raw/refs/heads/main/AgentSwift-0.1.zip) You must install the dependencies listed below in order for the binary to work. See below for setup commands.

Dependencies:

- Xcode

- Xcode command line tools

- xcodebuildmcp

- openspec

[](https://github.com/hpennington/agentswift/blob/main/screenshot2.png)

[](https://github.com/hpennington/agentswift/blob/main/screenshot.png)

A native macOS app that runs an autonomous AI coding agent for Apple platform development. Describe what you want to build, and AgentSwift uses Claude to discover your project, implement changes, build, run, and validate — without you touching Xcode.

## What it does

[](#what-it-does)

AgentSwift drives a multi-phase agentic workflow:

1. **Discover** — Claude inspects your Xcode project structure and schemes

2. **Implement** — edits source files to match your request

3. **Build** — runs xcodebuildmcp to compile

4. **Launch / Validate** — boots the app on a simulator or macOS, runs UI automation to verify behavior

5. **Archive** — marks the task complete

## Requirements

[](#requirements)

- macOS 26.1+

- Xcode

- Node.js / npm

- An [Anthropic API key](https://console.anthropic.com)

## Dependencies

[](#dependencies)

Install these two CLIs before running the agent:

### xcodebuildmcp

[](#xcodebuildmcp)

Provides build, launch, and UI automation capabilities for Xcode projects.

```

npm install -g xcodebuildmcp

```

### openspec

[](#openspec)

Tracks implementation specs across agent sessions.

```

npm install -g @fission-ai/openspec

```

## Setup

[](#setup)

1. Build and run the app in Xcode.

2. Open **Settings** and enter your Anthropic API key.

3. Select a **Project Folder** (the root of your Xcode project).

4. Optionally pick an **iOS Simulator** from the dropdown.

5. Type what you want to build and press **Cmd+Return**.

On the first run the agent discovers your project's scheme and simulator target. Subsequent runs skip discovery and go straight to implementation.

## Models

[](#models)

Model Use when Claude Opus 4.7 Complex tasks, large codebases Claude Sonnet 4.6 Faster iteration, lighter tasks

## Key behaviors

[](#key-behaviors)

- **Message queuing** — if you send a new message while the agent is running, the latest supersedes earlier ones

- **Build caching** — scheme, project path, and simulator ID are extracted after the first build and reused automatically

- **Error escalation** — the agent attempts one fix on a failure, then surfaces the error to you rather than looping

## Architecture

[](#architecture)

```

AgentSwiftApp.swift — app entry point

ContentView.swift — UI, view models, agentic loop

AnthropicService.swift — Anthropic API client (streaming SSE)

ToolExecutor.swift — bash / read_file / write_file execution

Item.swift — chat message model

```

No external Swift dependencies — pure SwiftUI + Foundation.

---

## [HN-TITLE] 15. Radar Laboratory – Interactive Radar Phenomenology

- **Source**: [https://radarlaboratory.com/](https://radarlaboratory.com/)

- **Site**: radarlaboratory.com

- **Author**: Created and maintained by Hunter Bowden.

- **Submitted**: 2026-04-25 14:24 UTC (Hacker News)

- **HN activity**: 36 points · [0 comments](https://news.ycombinator.com/item?id=47901776)

- **Length**: 6.6K words (~29 min read)

- **Language**: en

RADAR LABORATORY QUICK REF · λ=c/f · R=cτ\_d/2 · ΔR=cτ/2 · f\_d=2v\_r/λ · v\_u=PRF·λ/4 · θ=0.886λ/D

THEORY REFERENCE

ALL KEY RADAR FORMULAS — ORGANIZED BY TOPIC — WITH DERIVATIONS AND CONTEXT

01 — Propagation & Frequency

Fundamental Wavelength Relation

The wavelength λ of an electromagnetic wave is inversely proportional to frequency. Every radar formula contains λ — choosing the operating frequency is the first and most consequential design decision.

λ = c / f c = 3×10⁸ m/s (speed of light) f = carrier frequency (Hz)

λ — wavelength (m) · f — frequency (Hz) · c — 3×10⁸ m/s

PROPAGATIONFUNDAMENTAL

Radar Band Designations

IEEE letter-band designations define standard operating ranges. Band choice determines resolution, attenuation, target interaction, and hardware constraints.

L-band: 1–2 GHz λ ≈ 15–30 cm S-band: 2–4 GHz λ ≈ 7.5–15 cm C-band: 4–8 GHz λ ≈ 3.75–7.5 cm X-band: 8–12 GHz λ ≈ 2.5–3.75 cm Ku-band: 12–18 GHz λ ≈ 1.7–2.5 cm Ka-band: 26–40 GHz λ ≈ 0.75–1.15 cm

BANDSPROPAGATION

Atmospheric Absorption

Water vapor (H₂O) peaks at 22 GHz (~0.18 dB/km) and 183 GHz. Oxygen (O₂) dominates at 60 GHz (~15 dB/km) and 119 GHz. Atmospheric windows at 35, 77, and 94 GHz are exploited by automotive and military radars.

L\_atm (dB) = α(f) × R\_km Two-way loss = 2 × α × R\_km α: dB/km (frequency-dependent)

α — specific attenuation (dB/km) · R\_km — one-way range (km)

PROPAGATIONLOSSES

02 — Range Measurement

Range from Echo Delay

Radar times the two-way travel of a pulse. The round-trip delay τ\_d gives range exactly. Electromagnetic waves travel at c = 3×10⁸ m/s ≈ 150 m/μs (one-way).

R = c · τ\_d / 2 1 μs delay → R = 150 m

τ\_d — round-trip delay (s) · c — 3×10⁸ m/s

RANGEFUNDAMENTAL

Maximum Unambiguous Range

The radar must receive the previous pulse's echo before firing again. If the PRI is too short, a distant echo arrives after the next transmission and is reported at a false closer range.

R\_u = c / (2 · PRF) PRI = 1 / PRF (pulse repetition interval) R\_app = R\_true mod R\_u (folded range)

PRF — pulse repetition frequency (Hz) · PRI — 1/PRF (s)

RANGEAMBIGUITY

03 — Range Resolution & Pulse Compression

Pulse Width Resolution

Two targets closer than ΔR cannot be separated — their echoes overlap in the receiver. The matched filter output width equals cτ/2, which is why resolution and pulse duration are the same formula.

ΔR = c · τ / 2 τ = 1 μs → ΔR = 150 m τ = 10 ns → ΔR = 1.5 m

τ — pulse width (s) · ΔR — minimum resolvable separation (m)

RESOLUTION

Pulse Compression (LFM Chirp)

A chirp sweeps frequency across bandwidth B during pulse duration T. The matched filter compresses the pulse to width 1/B, independent of T. This breaks the energy–resolution trade-off.

ΔR\_compressed = c / (2B) Compression gain: G\_c = B·T Peak sidelobes: −13.2 dB (rect window) −42.7 dB (Hamming window)

B — chirp bandwidth (Hz) · T — pulse duration (s)

PULSE COMPRESSIONLFM

Matched Filter SNR

The matched filter is optimal — it maximizes SNR for any given waveform. The output SNR depends only on the signal energy E and noise spectral density N₀, not on pulse shape.

SNR\_out = 2E / N₀ E = Pt · τ (pulse energy) N₀ = k\_B · T\_sys · F (noise density)

E — signal energy (J) · N₀ — noise spectral density (W/Hz)

MATCHED FILTERSNR

04 — Doppler & Velocity

Doppler Frequency Shift

A moving target compresses (approaching) or stretches (receding) the reflected wavefront, shifting the echo frequency by f\_d. Positive Doppler = closing, negative = opening.

f\_d = 2 · v\_r / λ = 2 · v\_r · f\_c / c v\_r = f\_d · λ / 2 (velocity from Doppler) v\_r = v · cos(θ) (radial component)

v\_r — radial velocity (m/s) · θ — angle from boresight

DOPPLERVELOCITY

Maximum Unambiguous Velocity